Code Smells and Detection Techniques: A Survey

Design and code smells are characteristics of software source code that might indicate a deeper design problem. Code smells can lead to costly maintenance and quality problems. To remove them, software engineers should follow best practices, the set of techniques that improve software quality. Refactoring is a suitable technique for fixing code smells: it modifies the internal code structure without changing its functionality and suggests the best redesign changes to be performed. Developers who apply correct refactoring sequences

Building large Arabic multi-domain resources for sentiment analysis

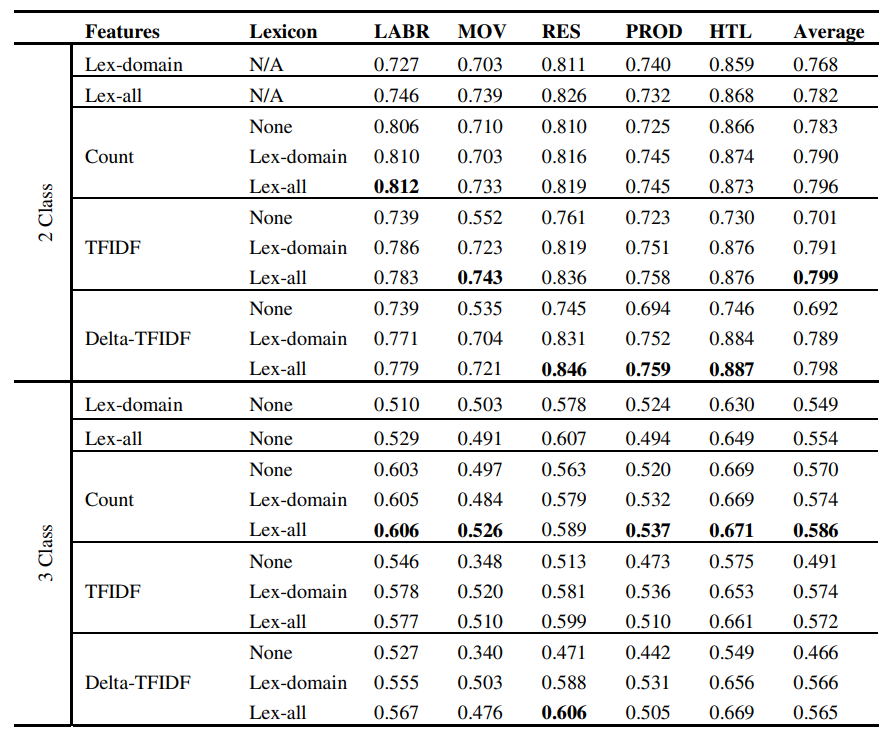

While there has been recent progress in the area of Arabic Sentiment Analysis, most of the resources in this area are either of limited size, domain specific, or not publicly available. In this paper, we address this problem by generating large multi-domain datasets for Sentiment Analysis in Arabic. The datasets were scraped from different review websites and consist of a total of 33K annotated reviews for movies, hotels, restaurants and products. Moreover, we build multi-domain lexicons from the generated datasets. Different experiments have been carried out to validate the usefulness of the

Complementary feature splits for co-training

In many data mining and machine learning applications, data may be easy to collect. However, labeling the data is often expensive, time-consuming or difficult. Such applications give rise to semi-supervised learning techniques that combine the use of labeled and unlabeled data. Co-training is a popular semi-supervised learning algorithm that depends on splitting the features of a data set into two redundant and independent views. In many cases, however, such sets of features are not naturally present in the data or are unknown. In this paper we test feature splitting methods based on

Exploiting neural networks to enhance trend forecasting for hotels reservations

Hotel revenue management is perceived as a managerial tool for room revenue maximization. A typical revenue management system contains two main components: Forecasting and Optimization. A forecasting component that gives accurate forecasts is a cornerstone of any revenue management system, since it provides a good picture of future demand. The output of the forecasting component is then used for optimization and allocation in a way that maximizes revenue. This shows how important it is to have a reliable and precise forecasting system. Neural Networks have been successful in forecasting

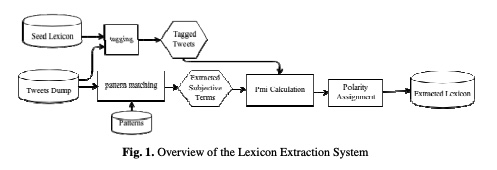

A fully automated approach for Arabic slang lexicon extraction from microblogs

With the rapid increase in the volume of Arabic opinionated posts on different social media forums comes an increased demand for Arabic sentiment analysis tools and resources. Social media posts, especially those made by the younger generation, are usually written in colloquial Arabic and include a lot of slang, much of which evolves over time. While some work has been carried out to build modern standard Arabic sentiment lexicons, these need to be supplemented with dialectal terms and continuously updated with slang. This paper proposes a fully automated approach for building a

AraVec: A set of Arabic Word Embedding Models for use in Arabic NLP

Advancements in neural networks have led to developments in fields like computer vision, speech recognition and natural language processing (NLP). One of the most influential recent developments in NLP is the use of word embeddings, where words are represented as vectors in a continuous space, capturing many syntactic and semantic relations among them. AraVec is a pre-trained distributed word representation (word embedding) open source project which aims to provide the Arabic NLP research community with free-to-use and powerful word embedding models. The first version of AraVec provides six

Investigating analysis of speech content through text classification

The field of Text Mining has evolved over the past years to analyze textual resources. However, it can be used in several other applications. In this research, we are particularly interested in applying text mining techniques to audio materials, after converting them into text, in order to detect the speakers' emotions. We describe our overall methodology and present our experimental results. In particular, we focus on the different feature selection and classification methods used. Our results show interesting conclusions opening up new horizons in the field, and suggest an emergence of

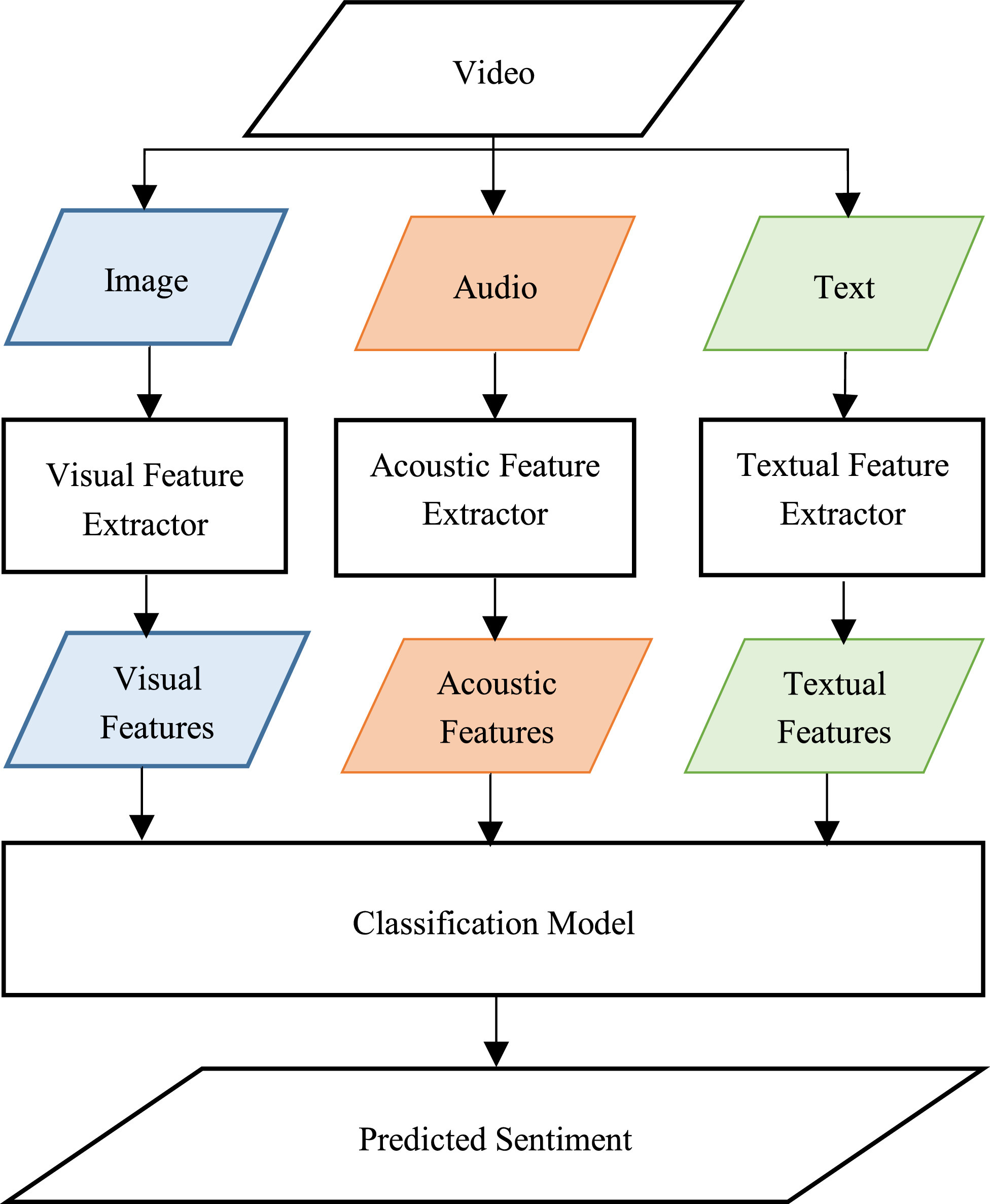

Multimodal Video Sentiment Analysis Using Deep Learning Approaches, a Survey

Deep learning has emerged as a powerful machine learning technique for multimodal sentiment analysis tasks. In recent years, many deep learning models and algorithms have been proposed in the field of multimodal sentiment analysis, which creates a need for survey papers that summarize recent research trends and directions. This survey paper provides a comprehensive overview of the latest developments in this field. We present a detailed categorization of thirty-five state-of-the-art models, which have recently been proposed in the field of video sentiment analysis, into

Automated cardiac-tissue identification in composite strain-encoded (C-SENC) images using fuzzy K-means and Bayesian classifier

Composite Strain Encoding (C-SENC) is an MRI acquisition technique for simultaneous acquisition of cardiac tissue viability and contractility images. It combines the use of black-blood delayed-enhancement imaging to identify the infarcted (dead) tissue inside the heart wall muscle and the ability to image myocardial deformation (MI) from the strain-encoding (SENC) imaging technique. In this work, we propose an automatic image processing technique to identify the different heart tissues. This provides physicians with a better clinical decision-making tool in patients with myocardial infarction

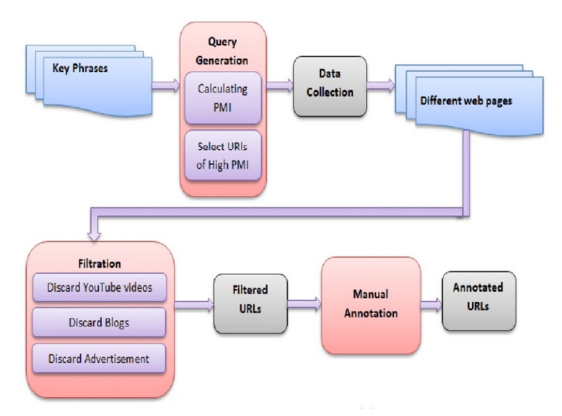

A system for assessing the quality of Web pages

The World Wide Web has brought about an unprecedented explosion in the amount of information available online, largely in the form of Web pages. The fact that anyone can publish anything has ultimately led to pages of varying quality. This paper investigates means for assessing the quality of an arbitrary Web page and provides a quantitative approach for selecting pages with high-quality content. The work was motivated by the need to locate pages that may be considered as candidates for translation. © 2013 IEEE.
